- Blog

- About

- Contact

- Amazon cloud drive for mac

- Bigg boss 10 watch online 21st november 2016

- Microsoft flight simulator 2017 download

- Gamma iptv player download

- Run microsoft sql server on mac using docker

- Super smash bros lightsaber sound effect

- Inuyasha season 3 episode 1 kissanime

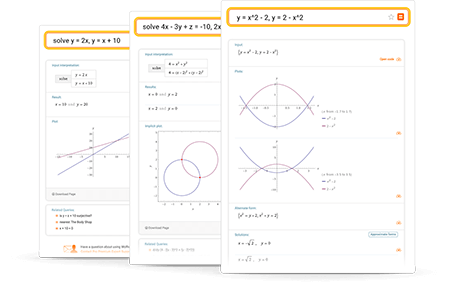

- Large system of equations solver

- Vst plugins bundle

Hi cvxpy maintainers and contributors! As always, I want to start out by highlighting what awesome work you're doing with this module. Love it!

For the question. Now, consider a (d x d)-dim decision matrix R. My initial objective is to minimize the squared Frobenius norm:

R = argmin ||Y - E*R*A'||_F^2

However, it turns out that without any further structure, R estimated this way greatly overfits, and on unseen data it is much better to just use an identity matrix as R (because E and A represent embeddings, and the cosine similarity should resemble Y). But the embeddings are unsupervised in the sense that they are raw embeddings from some BERT-like model, and now I want to finetune (rotate) these embeddings to the space of Y.

My first attempt to regularize R was to enforce it to be diagonal, essentially reducing the number of variables from d^2 to d. This is easily done in cvxpy (thank you once again!). However, it turns out that this regularization is not enough. It is still better to use the identity matrix as R.

My second attempt was to add an explicit penalty term, similar to:

R = argmin ||Y - E*R*A'||_F^2 + lambda*||R||_F^2,

where lambda is some scalar penalty. Again, this is easily implemented in cvxpy, but it takes forever to run and I end up canceling the program before completion.

Question: Any inputs on how to solve this optimization problem?

It should run off-the-shelf but takes 5-7 minutes to complete.

```python
import cvxpy as cp

# Fragments of the finetuner class; "..." marks parts not shown in the post.
self.Y = cp.Parameter(shape=(self.N, self.M), name="Y")
self.A = cp.Parameter(shape=(self.M, self.d), name="A")
self.R = cp.Variable(shape=(self.d, self.d), ...)

def solve(self, E, Y=None, A=None, penalty=0):
    ...
    # Data-fit term: squared norm of the residual Y - E R A^T.
    self.main_loss = cp.norm(x=self.Y - E @ self.R @ self.A.T, p=self.NORM)**2
    # Penalty term: penalty * ||R||^2.
    self.regularization_loss = cp.multiply(penalty, cp.norm(self.R, p=self.NORM)**2)
    self.L = self.main_loss + self.regularization_loss
    ...
    # self.Q is defined in a part not shown (presumably a cp.Minimize of self.L).
    self.problem = cp.Problem(objective=self.Q)
    # Only the argument list survives in the post; the call target is assumed.
    self.problem.solve(verbose=self.verbose, solver=self.solver, ignore_dpp=True)
    ...

R_opt = finetuner.solve(E=E, Y=Y, ...
```

Thank you so much for taking the time to look at my problem in this level of detail, highly appreciated! Let me address each of your comments one-by-one.

I originally adopted the Parameter objects in case I needed to re-run the solver to tune a penalty hyperparameter by cross-validation, but that seems a little infeasible at the moment. I've changed the Parameter objects to plain old constants, thanks!

I'm running on a MacBook Pro, M1 (2020) with 8 cores and 16 GB RAM. The above is running within acceptable time, but it does frighten me to implement some kind of cross-validation.

I don't have too much experience in reformulating problems like this one, but that's definitely something to think about. This is a very good idea! The thing is that I would need to tune `lamb` by cross-validation, but if the problem gets formulated in an efficient way, it might not be a problem. Another problem with this strategy is that I do not have that much data, so splitting the data while still having to solve for d parameters (in the case I enforce R to be diagonal) is problematic (related to my last point, see bottom).

Very good point to try out different solvers. I'm going through the most obvious choices right now to test performance. It already appears that the nuclear norm is boosting performance out-of-sample, but still not enough to beat the identity matrix. This is of course not too scientific either, because that would require another validation set, but that is something I can live with for now.

Re: A follow-up question on the smaller problem vs full-size

I'm currently testing different things on a smaller scale (with N=400 compared to 900 in the full problem).
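For the Frobenius-penalized objective specifically, one possible efficient formulation skips the conic solver altogether. Setting the gradient to zero gives the normal equations E'E R A'A + lambda R = E'Y A, and these decouple elementwise in the eigenbases of the two d x d Gram matrices E'E and A'A. The sketch below is not the code from the thread: the function name `ridge_rotation_path` and the synthetic data are made up for illustration. It assumes E is (N, d), Y is (N, M) and A is (M, d), that the norm is Frobenius, and that R is unconstrained (not diagonal, no nuclear norm).

```python
import numpy as np


def ridge_rotation_path(E, Y, A, penalties):
    """Solve min_R ||Y - E R A^T||_F^2 + lam * ||R||_F^2 for each lam > 0.

    Hypothetical helper. Shapes: E (N, d), Y (N, M), A (M, d).
    Returns one (d, d) matrix per penalty value.
    """
    # Eigendecompositions of the two d x d Gram matrices, computed once.
    dE, VE = np.linalg.eigh(E.T @ E)
    dA, VA = np.linalg.eigh(A.T @ A)
    # Guard against tiny negative eigenvalues caused by round-off.
    dE = np.clip(dE, 0.0, None)
    dA = np.clip(dA, 0.0, None)
    # Right-hand side of the normal equations, rotated into the eigenbases.
    G = VE.T @ (E.T @ Y @ A) @ VA
    # In the eigenbases: (dE[i] * dA[j] + lam) * R_tilde[i, j] = G[i, j].
    spectrum = np.outer(dE, dA)
    return [VE @ (G / (spectrum + lam)) @ VA.T for lam in penalties]


# Synthetic data, only to exercise the function.
rng = np.random.default_rng(0)
N, M, d = 40, 30, 5
E = rng.normal(size=(N, d))
A = rng.normal(size=(M, d))
Y = rng.normal(size=(N, M))

penalties = [0.1, 1.0, 10.0]
R_path = ridge_rotation_path(E, Y, A, penalties)
```

Because the eigendecompositions and the rotated right-hand side are shared across penalty values, each additional lambda costs only an elementwise division and two d x d matrix products. That is what could make a cross-validation sweep over lambda affordable: inside a fold, the function is called once on the training rows with the whole grid of penalties.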